In August 2020, we commissioned a global survey to gather views on change within the academic sector. The survey was sent to a random selection of 172,033 academics, librarians and students within Emerald’s literati community. A total of 1,274 literati from 188 countries responded.

The survey report covers attitudes to research impact evaluation, cultural challenges within academia, openness and transparency, and the role publishers can play in furthering change within the research ecosystem.


Impact evaluation

On this page, we reveal the findings of our Academic culture survey 2020 in relation to impact evaluation. Here we investigate how change-ready we are as a sector to move beyond traditional impact metrics and embrace fairer and more meaningful research evaluation.

Key themes

There is a growing desire within the research community for a broader range of metrics and indicators to measure the quality of individual research contributions. As a signatory of DORA and in line with our Real Impact Manifesto to move beyond metrics and celebrate impact commitment, we have rolled out various initiatives to create awareness of the limitations of metrics and drive real impact.

One area where we have focused attention is helping researchers demonstrate the influence of their research on practice, policy and society. In collaboration with industry experts, we are developing a suite of resources that will help researchers tell their impact story. Support materials that are readily available include an Impact Literacy Workbook and Institutional Healthcheck Workbook.

To guide our efforts to further research impact, we are continuously listening to the research community and probing further into the barriers to, and opportunities for, change. Our surveys and reports in these areas over the past three years have been an attempt to stimulate debate and bring conversations to the fore.

How change-ready are we?

In this year’s survey, we found the desire for a broader impact metric had grown when compared to the previous year, with 20% of the research community calling for journal impact factors (JIFs) to be dropped altogether, up from 13% in 2019. However, in terms of how research quality is measured at their institution, JIFs were perceived to play an important role – 71% selected JIFs as the way research quality is measured at their institution, up from 58% in 2019.

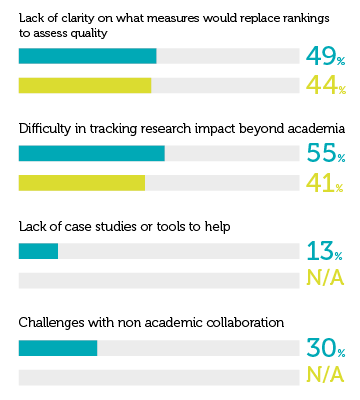

According to respondents, the biggest challenges to change include ‘Incentives for career progression still aligned to traditional impact metrics (i.e. publishing in ranked journals)’ (56%), closely followed by ‘Difficulty in tracking research impact beyond academia’ (55%), and ‘Lack of clarity on what measures would replace rankings to assess quality’ (49%).

Driving change

In terms of what individual researchers were willing to do to broaden the impact of their work and push for change, ‘Publishing open access and sharing links to supporting datasets to get more ‘eyeballs’ on my work’ came out on top, with just over half of researchers (51%) selecting this option. ‘More opportunities for collaboration between industry and practice’ was believed to be the best way to make change happen, with 63% supporting this choice, up slightly from 61% in 2019.

On a scale of 1–10 where 1 is not at all important and 10 is very important, how important is demonstrating impact of research on society to... (average score out of 10)?

| | You personally | Your university | Funders | Policymakers | Society |
|---|---|---|---|---|---|
| Overall 2020 | 8 | 8 | 8 | 8 | 8 |
| Overall 2019 | 8 | 8 | 8 | 7 | 7 |
| Overall 2018 | 8 | 7 | 8 | 7 | 7 |

How is the quality of your research impact currently measured (percentage of times chosen in top 3)?

| | Journal citations and impact factors | Tenure or career advancement | Funding opportunities | Measurable change in practice, policy or behaviour | Provable effects of research in the real world | Improved societal, health, economic or environmental outcomes | Mobilised knowledge that affects decision-making in applied settings | Other (including bottom 3 chosen options) |
|---|---|---|---|---|---|---|---|---|
| Overall 2020 | 71% | 33% | 31% | 24% | 23% | 19% | 18% | 35% |
| Overall 2019 | 58% | 30% | 35% | 59% | 59% | 61% | 49% | N/A |

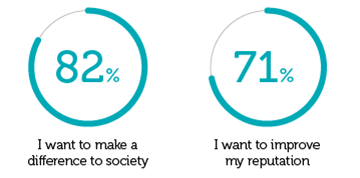

On a scale of 1 to 5, where 1 is not at all important and 5 is very important, how important are the following factors in helping achieve broader impact with your work (net important, scored 4–5)?

| | I want to make a difference to society | I want to improve my reputation | I want to advance my career | I want to increase funding opportunities | I need to meet institutional or funder requirements |
|---|---|---|---|---|---|
| Overall | 82% | 71% | 67% | 61% | 60% |

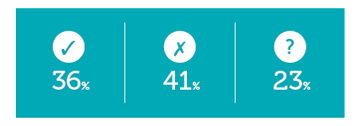

Do you expect the priority of measuring real-world impact to change in your institution in the next 12 to 18 months?

| | Yes | No | I don't know |
|---|---|---|---|
| Overall 2020 | 36% | 41% | 23% |
| Overall 2019 | 40% | 28% | 32% |

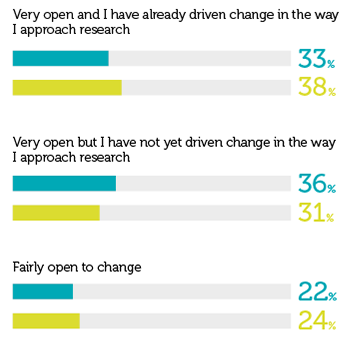

How strongly do you support the idea of changing the way research impact is measured?

| | Very open and I have already driven change in the way I approach research | Very open but I have not yet driven change in the way I approach research | Fairly open to change | Neither open to change nor against it | Fairly against change | Not at all open |
|---|---|---|---|---|---|---|
| Overall 2020 | 33% | 36% | 22% | 7% | 2% | 1% |
| Overall 2019 | 38% | 31% | 24% | 5% | 1% | 1% |

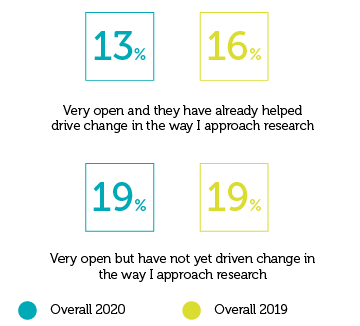

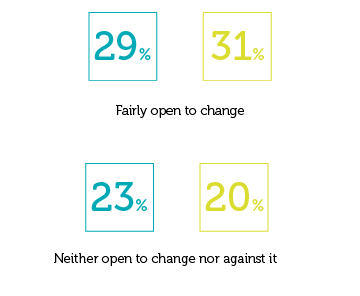

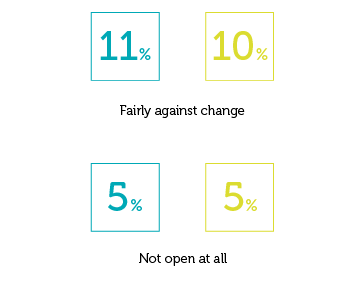

How supportive / interested are those in your broader institution in driving change when it comes to other ways to measure research impact?

| | Very open and they have already driven change in the way I approach research | Very open but have not yet driven change in the way I approach research | Fairly open to change | Neither open to change nor against it | Fairly against change | Not at all open |
|---|---|---|---|---|---|---|
| Overall 2020 | 13% | 19% | 29% | 23% | 11% | 5% |
| Overall 2019 | 16% | 19% | 30% | 20% | 10% | 5% |

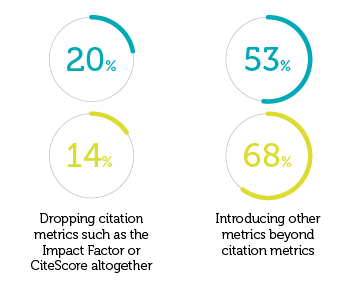

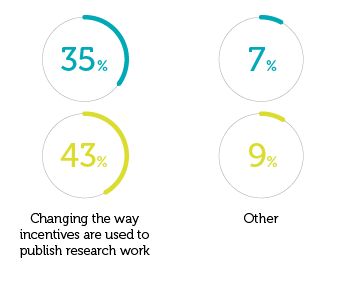

What main change would you like to see in the way research quality is measured?

| | I want to make a difference to society | I want to improve my reputation | I want to advance my career | I want to increase funding opportunities |
|---|---|---|---|---|
| Overall 2020 | 20% | 53% | 35% | 7% |
| Overall 2019 | 14% | 68% | 43% | 9% |

Which of the following do you consider to be the biggest 'challenges' of changing the way research impact is assessed?

| | Incentives for career progression still aligned to traditional impact metrics | Organisation resistant to change / entrenched culture | Lack of funding for open research | Regional drivers (discrepancies around impact ‘readiness’) | Lack of clarity on what measures would replace rankings to assess quality | Difficulty in tracking research impact beyond academia | Lack of case studies or tools to help | Challenges with non-academic collaboration |
|---|---|---|---|---|---|---|---|---|
| Overall 2020 | 56% | 47% | 39% | 14% | 49% | 55% | 13% | 30% |
| Overall 2019 | 60% | 34% | 26% | 9% | 44% | 41% | n/a | n/a |

When it comes to your research, what types of change would you consider implementing?

| | Publishing non-traditional content (short form, policy notes, blogs etc.) if the rewards mechanisms for this were in place | Publishing non-traditional content (short form, policy notes, blogs etc.) if the rewards mechanisms for this were in place | Publishing open access and sharing links to supporting datasets to get more ‘eyeballs’ on my work | Saving published work to my institutional repository (green open access) | Publishing my research with a publisher that auto-deposits my Author's Accepted Manuscript (AAM) on my behalf | I’m not considering changing my processes / methodologies | I would like to make these changes, but feel unable to do so due to my institution | Something else |
|---|---|---|---|---|---|---|---|---|
| Overall 2020 | 46% | 44% | 51% | 34% | 28% | 10% | 22% | 5% |
| Overall 2019 | 48% | 51% | 29% | 24% | n/a | 9% | n/a | 4% |

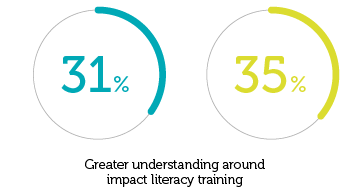

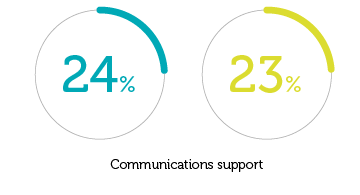

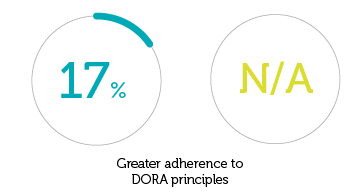

In your opinion what are the best way(s) to enable change to happen (percentage of times chosen in top 3)?

|

|

Greater understanding around impact literacy training | More opportunities for collaboration between industry and practice | Communications support | Greater adherence to DORA principles | More publishers making research open access | More opportunities to debate the issues in a public forum | Other |

|---|---|---|---|---|---|---|---|

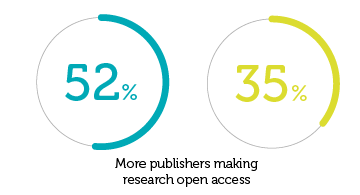

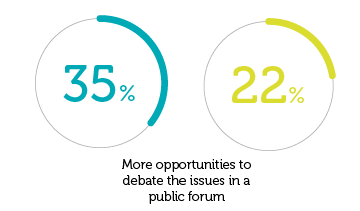

| Overall 2020 | 31% | 63% | 24% | 17% | 52% | 35% | 8% |

| Overall 2019 | 35% | 61% | 23% | n/a | 35% | 22% | 7% |

Your words & comments

Under pressure

When asked, ‘What main change would you like to see in the way research quality is measured?’, suggestions included:

Quality over quantity: “Pay attention to the quality of a researcher’s work, rather than quantity of research. Not all research should be equally weighted. Philosophical reflection takes time. Yet, quantitative work can have quick outcomes. But their impacts are different. Current evaluation drives most researchers to do quick work, especially when they have heavy teaching load”

Female teacher, India

Changes to incentives: “Changing the incentive structure for the career and performance evaluation beyond the publication and impact factors”

The Emerald view

Supporting research that can make a real difference is crucial to our progress on global issues such as climate change and poverty, says Tony Roche, Executive Vice President of Publishing and Strategic Relationships at Emerald. In this context, he calls for the development and recognition of a broader range of research evaluation metrics, in addition to narratives that support the impact journey.

Drawing on our latest survey, it is encouraging to see researchers, institutions, funders and policy makers placing greater emphasis on the societal impact of research. While this is now widely accepted in principle, poorly aligned evaluation and incentive structures are clearly blocking these aspirations. Bibliometric indicators and citations have a role to play, and academic rigour need not be sacrificed for research to better connect with real world impact.

There has been positive movement in some national evaluation systems, with open routes of dissemination increasingly preferred. However, the participation of the intended beneficiaries in society is still limited, and mechanisms to mobilise knowledge remain poorly developed.

This year’s survey also highlights cultural challenges within research that must be addressed through the research evaluation process as a driver of change, to incentivise responsible research practices for the benefit of all.

Giving voice to the underrepresented

Emerald is committed to action through co-creation, to bring the voice of the beneficiary as well as underrepresented researchers themselves more directly into the research and publication process, and we will hold ourselves to account to measure progress here.

As a participant within the global research and scholarly comms ecosystem, we work with over 30,000 researchers each year, and through our own commitments to diversity and inclusion we can ensure that the research we publish is more representative and reflective of the needs of society.

Supporting research impact

Our commitments extend to working with policy makers and funders, so that a wider array of indicators and metrics, as well as the narratives to support the impact journey, are developed and recognised through evaluation processes themselves. This clearly requires coordinated efforts and a willingness to work together, so that research can perform better in its critical underpinning role to support societal progress in areas such as climate change mitigation, environmental degradation, poverty and illiteracy.